Gallery

Path of Titans mods

Mods are in pages/paleo-presentation/mods/. Click a tile to open it at full size.

Instinct-Driven Dinosaur Agents · Path of Titans

Right after the architecture overview in this deck: open the live HTML tabs (a new tab keeps the presentation open).

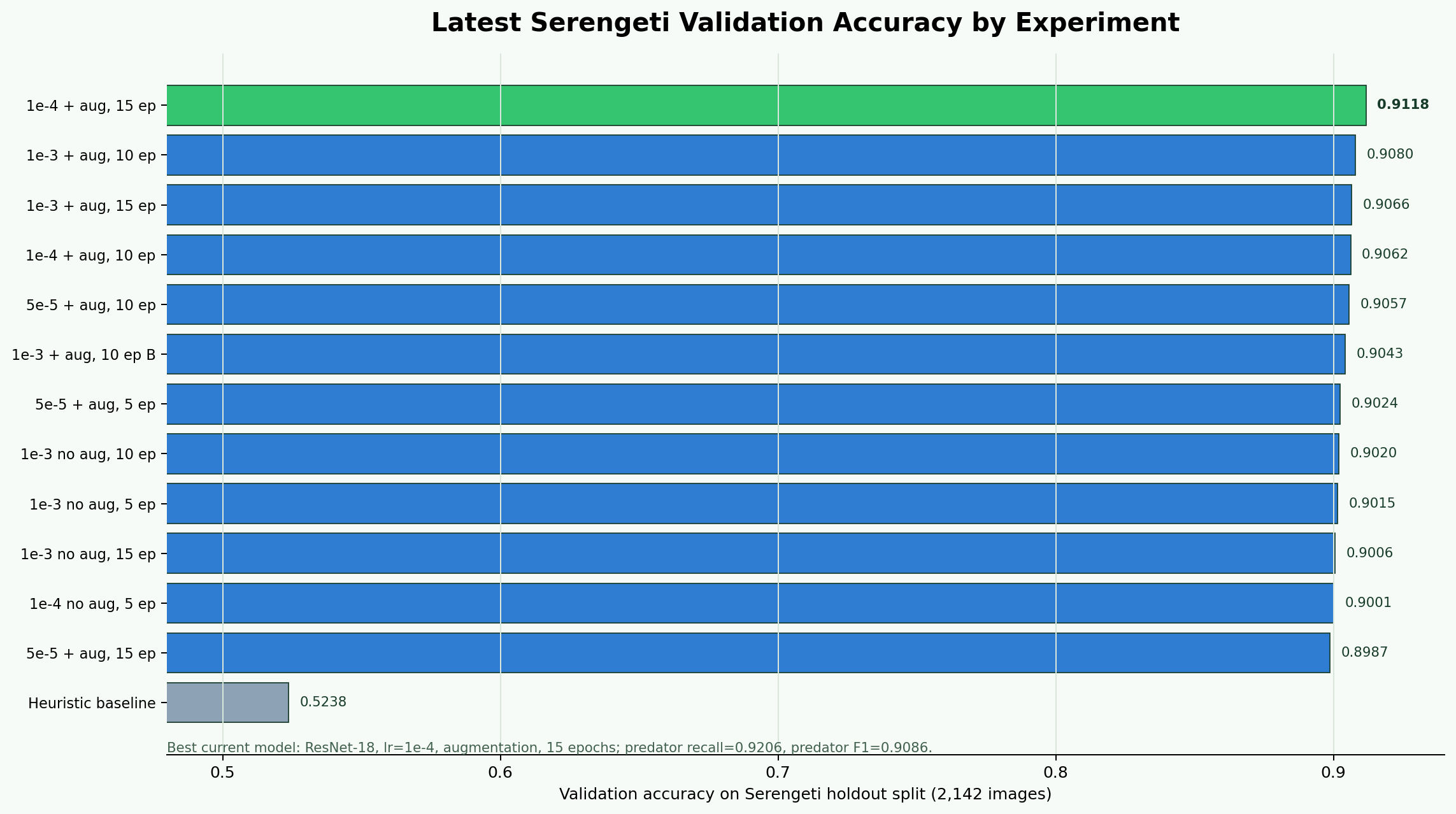

LR=1e-4 was the best-accuracy run.

| Experiment | Val Accuracy | Pred Recall | Pred F1 | LR | Epochs |
|---|---|---|---|---|---|
| ResNet-18 LR=1e-4 + aug | 0.9118 | 0.9206 | 0.9086 | 1e-4 | 15 |
| ResNet-18 LR=1e-3 + aug | 0.9080 | 0.9020 | 0.9033 | 1e-3 | 10 |
| ResNet-18 LR=1e-3 + aug | 0.9066 | 0.9167 | 0.9034 | 1e-3 | 15 |
| ResNet-18 LR=1e-4 + aug | 0.9062 | 0.8990 | 0.9012 | 1e-4 | 10 |
| ResNet-18 LR=5e-5 + aug | 0.8987 | 0.8569 | 0.8896 | 5e-5 | 15 |

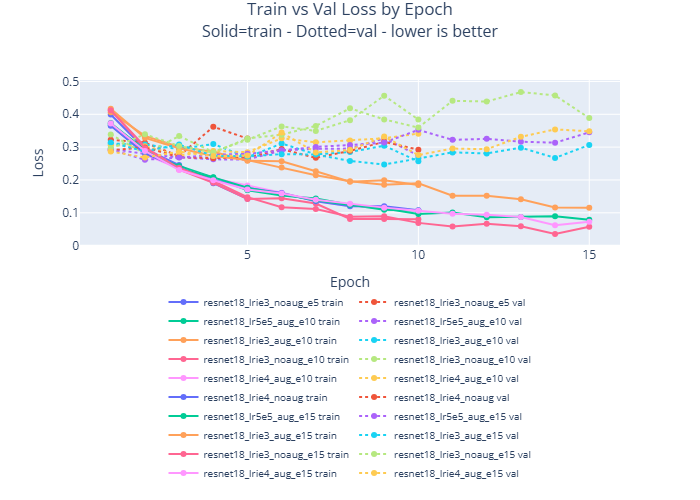

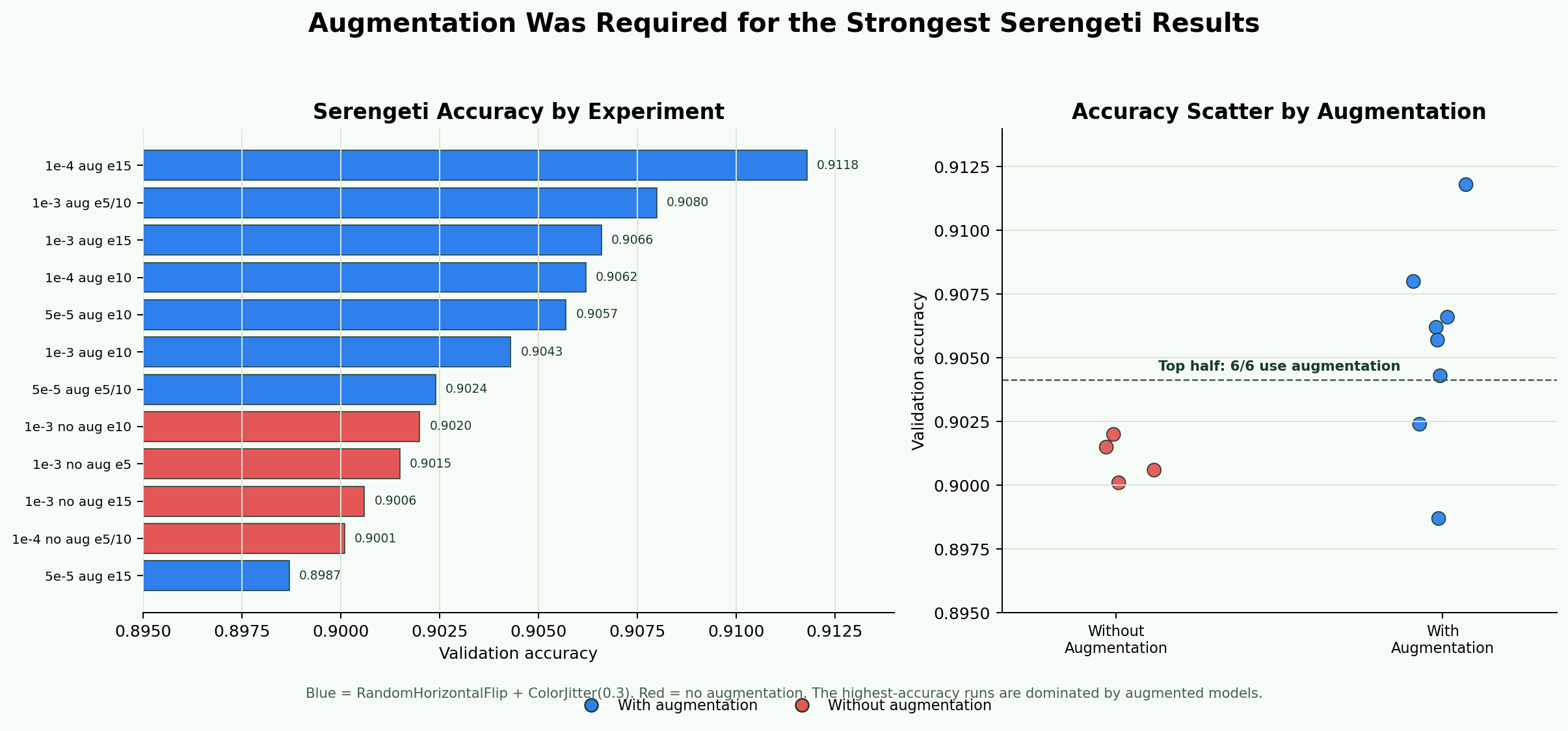

LR=1e-4 with augmentation and 15 epochs was the strongest run, so we used it as the best real-image checkpoint before adapting to Path of Titans.

Augmentation used RandomHorizontalFlip and ColorJitter(0.3). The run reached 0.9118 validation accuracy, 0.9206 predator recall, and 0.9086 predator F1.
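As a quick check of that choice, the rows of the experiment table can be ranked per metric. This is only a small sketch: the `RUNS` list transcribes the table on this page, and the field names (`val_acc`, `pred_recall`, `pred_f1`) and the `best_run` helper are labels made up for this example, not part of the project's code.

```python
# Rows transcribed from the experiment table on this page.
# Field names are illustrative, not taken from the project's code.
RUNS = [
    {"lr": "1e-4", "epochs": 15, "val_acc": 0.9118, "pred_recall": 0.9206, "pred_f1": 0.9086},
    {"lr": "1e-3", "epochs": 10, "val_acc": 0.9080, "pred_recall": 0.9020, "pred_f1": 0.9033},
    {"lr": "1e-3", "epochs": 15, "val_acc": 0.9066, "pred_recall": 0.9167, "pred_f1": 0.9034},
    {"lr": "1e-4", "epochs": 10, "val_acc": 0.9062, "pred_recall": 0.8990, "pred_f1": 0.9012},
    {"lr": "5e-5", "epochs": 15, "val_acc": 0.8987, "pred_recall": 0.8569, "pred_f1": 0.8896},
]

METRICS = ("val_acc", "pred_recall", "pred_f1")


def best_run(runs, metric):
    """Return the run with the highest value for the given metric."""
    return max(runs, key=lambda run: run[metric])


# Map each metric to the (lr, epochs) pair of its top run.
BEST = {}
for metric in METRICS:
    top = best_run(RUNS, metric)
    BEST[metric] = (top["lr"], top["epochs"])

if __name__ == "__main__":
    for metric, (lr, epochs) in BEST.items():
        print(f"{metric}: lr={lr}, epochs={epochs}")
```

In this table the same run (LR=1e-4, 15 epochs) tops all three columns, so choosing it does not trade one of these metrics against another.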

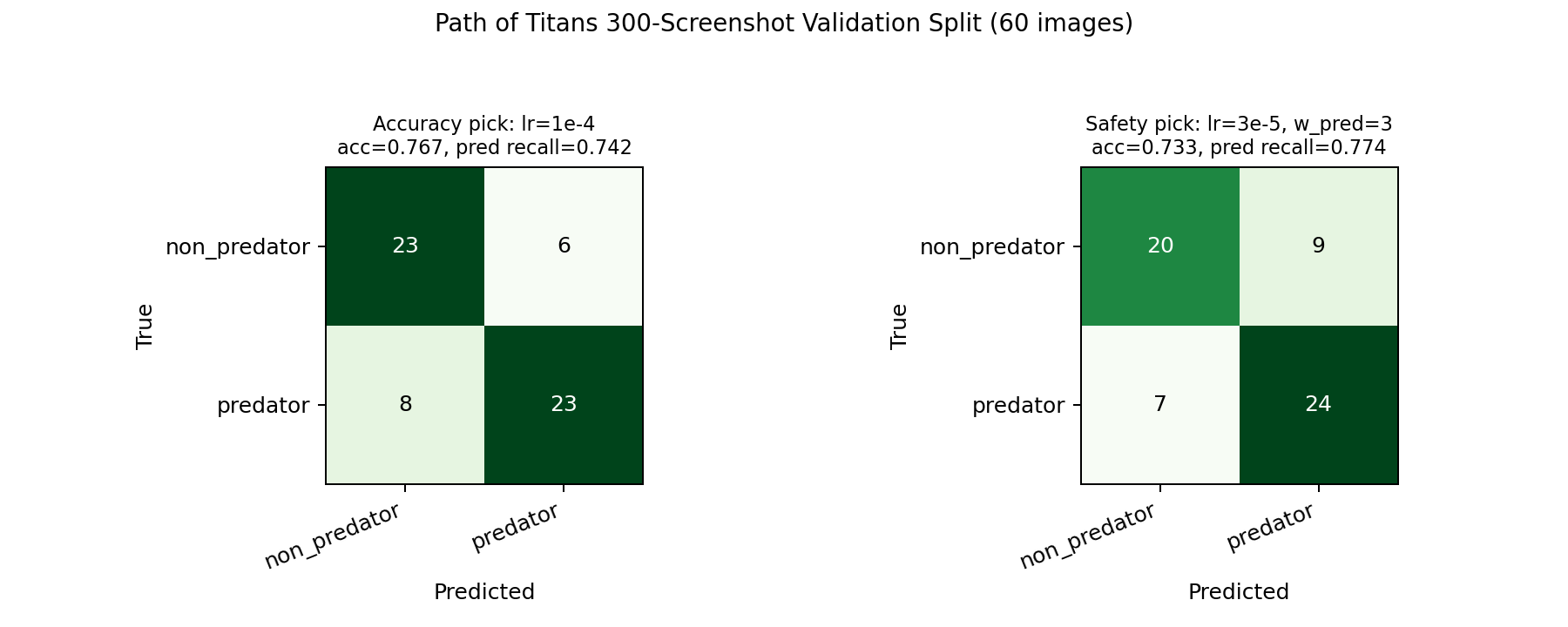

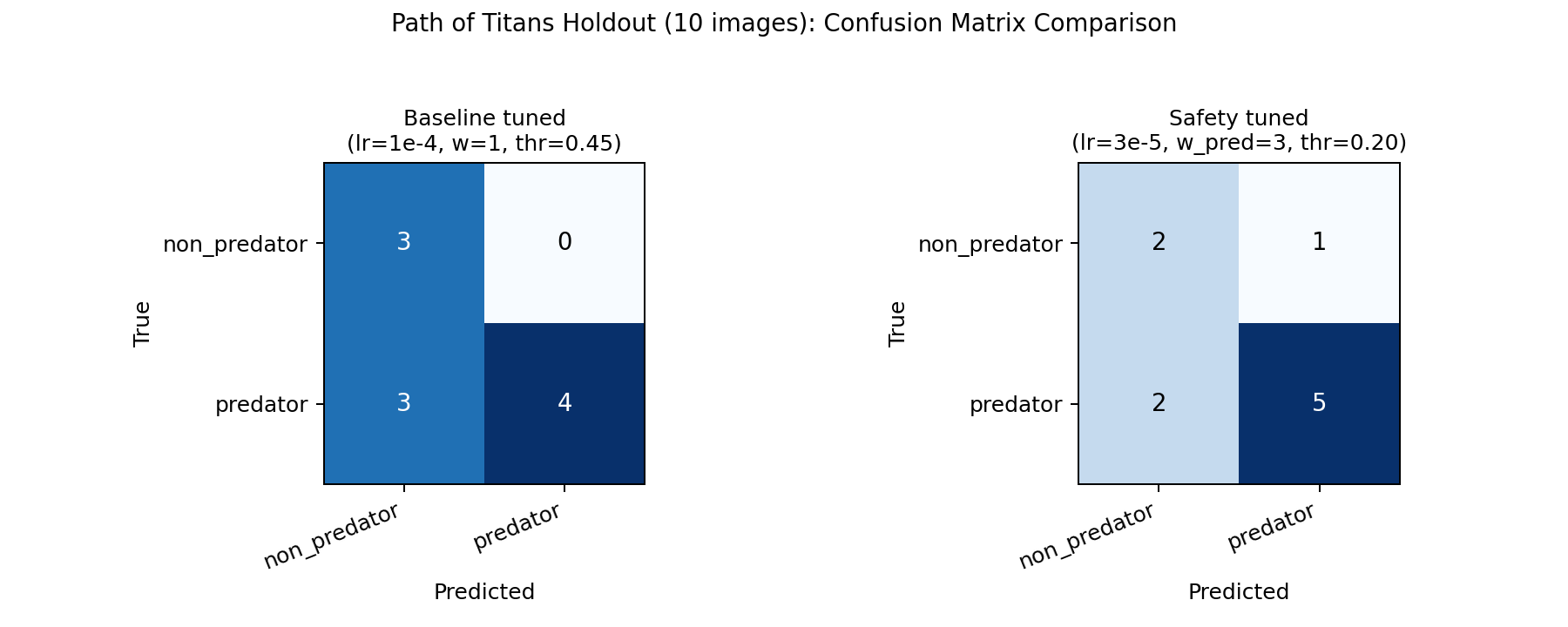

lr=1e-4 is still the accuracy pick: it was strongest on Serengeti and on the validation split of the 300-screenshot PoT set. But on the 10-image game holdout, class weighting alone did not fix false negatives at the default threshold. We switched from "best accuracy checkpoint" to a safety operating point: lr=3e-5, predator class weight 3.0, and threshold 0.20, because the live agent should over-warn rather than miss predators.
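To make the operating-point trade-off concrete, here is a minimal sketch of thresholding predator probabilities and recomputing recall, precision, and accuracy. The ten scores and labels are hypothetical, picked only so the counts agree with the figures reported on this page for the 10-image holdout (recall 0.571 to 0.714, precision 0.833, accuracy 0.70). The 7 predator / 3 non-predator composition is inferred from those figures, and reading "default threshold" as 0.5 is an assumption.

```python
# Hypothetical predator probabilities for a 10-image holdout.
# NOT the project's real model outputs: the values are chosen so the
# resulting counts agree with the metrics reported in the text.
SCORES = [0.91, 0.84, 0.77, 0.62, 0.33, 0.15, 0.08, 0.27, 0.12, 0.05]
LABELS = [1, 1, 1, 1, 1, 1, 1, 0, 0, 0]  # 1 = predator (inferred 7/3 split)

DEFAULT_THRESHOLD = 0.5   # assumed meaning of "default threshold"
SAFETY_THRESHOLD = 0.20   # threshold stated in the text


def confusion_counts(scores, labels, threshold):
    """Return (tp, fp, fn, tn), predicting 'predator' when score >= threshold."""
    tp = fp = fn = tn = 0
    for score, label in zip(scores, labels):
        predicted_predator = score >= threshold
        if predicted_predator and label == 1:
            tp += 1
        elif predicted_predator:
            fp += 1
        elif label == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def summarize(tp, fp, fn, tn):
    """Compute recall, precision, and accuracy, rounded to three decimals."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {
        "recall": round(recall, 3),
        "precision": round(precision, 3),
        "accuracy": round(accuracy, 3),
    }


default_metrics = summarize(*confusion_counts(SCORES, LABELS, DEFAULT_THRESHOLD))
safety_metrics = summarize(*confusion_counts(SCORES, LABELS, SAFETY_THRESHOLD))

if __name__ == "__main__":
    print("default:", default_metrics)
    print("safety: ", safety_metrics)
```

With these made-up scores, lowering the threshold catches one more predator (missed predators drop from 3 to 2) at the cost of one false alarm, which is the kind of trade a safety operating point accepts.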

Threshold 0.20 on the weighted run moved recall from 0.571 to 0.714 on the tiny holdout, trading one extra false alarm for fewer missed threats.

LR=1e-4 + aug + 15 epochs: 0.9118 validation accuracy and 0.9206 predator recall.

lr=1e-4 remained the best model by accuracy on the 60-image validation split, at 0.7667.

lr=3e-5, predator weight 3.0, threshold 0.20.

Holdout recall moved from 0.571 to 0.714 by prioritizing fewer missed predators over raw accuracy.

LR=1e-4 + aug + 15 epochs; predator recall 0.9206, predator F1 0.9086.

lr=1e-4, 15 epochs; split was 240 train / 60 validation.

lr=3e-5, predator weight 3.0, threshold 0.20; accuracy 0.70, precision 0.833.

Screen recording from pages/paleo-presentation/demo/. Use the player controls to play or scrub.

Opens in a new tab. Paths are relative to the site pages/ root.